- The Hiring Process Didn’t Break—It Was Replaced

- Executive Security Briefing: Fake Recruiters as Data-Harvesting Threats

- From Silicon to Software: Architecting Digital Transformation

- Antenna Testing, OTA Validation, RF Compliance, and ASIC Validation for Deep-Tech Systems

- Who Is an Entrepreneur?

- Design of Experiments: Engineering Yield in ASIC, RF, and Antenna Systems

- The Truth About 5G Radiation: What Science Really Says

- Signal Distortion vs. Signal Jamming: A Receiver-Centric Perspective

- Technical Product Strategy Brief

- The Leadership Challenge: Turning Bold Ideas into Market Wins

- Startup Success from Silicon to System: Product, Leadership, and Scaling

- Reducing First-Silicon Risk—from Silicon to System

- Semiconductor ASIC Life Cycle in the Context of Startup Company Challenges

- Project Risk Management — A Competitive Advantage from Silicon to System

- Design Audit: From Silicon to System, Protect Your ROI

- Engineering Confidence Into Technology Investments

- Why System-Level Thinking Determines ASIC Startup Success

- Your Product Isn’t Selling? Maybe It’s Not the Product—It’s the Market

- When Robots Think, Cities Move

- Why Statement of Work Alignment Determines Startup Execution Success

- Risk Calculation by Startup Founders: Why SoW, Audits, and Early Validation Matter

- Before You Invest: Why Most Deep-Tech Startups Fail at Product Definition

- The Path of Least Resistance in Product Design

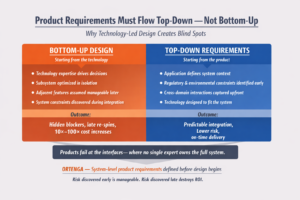

- Why Most Products Fail Before Engineering Begins

- Recruiting vs. Partnering: A Time-to-Market Decision Framework

- From Technology to Product: Choosing the Application That Matters

- Defining the Wrong Product Is the Fastest Way to Burn Capital

- Why Customers Return Products That “Passed” Validation

- Saving pennies early is one of the most expensive mistakes in product development

- Mobile Connectivity Is No Longer One Network

- Why Even Great CTOs Miss Product Requirements

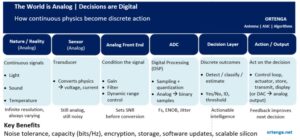

- The World Is Analog. Decisions Are Digital.

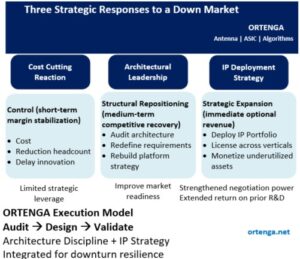

- When Markets Contract, Real Engineering Leadership Emerges

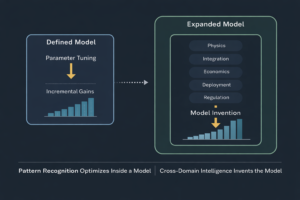

- Cross-Domain Intelligence vs. Pattern Recognition

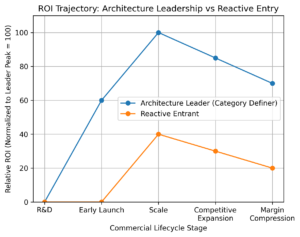

- First to Define, First to Win

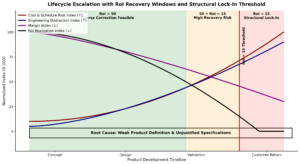

- From Concept to Customer Return: Why Weak Product Definitions Inflate Cost, Slip Schedules, and Undermine RoI

- Wireless Power Transfer: It’s Not About RF — It’s About Wavelength Discipline

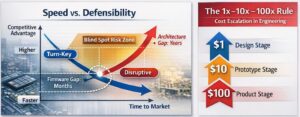

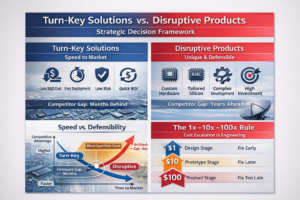

- Turn-Key or Disruptive? The Strategic Architecture Decision That Determines Market Leadership

- Technology Is Not a Product

- The Requirement That Never Got Written

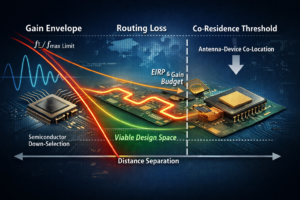

- When Gain Becomes Scarce, Wavelength Forces Antenna–Device Co-Residence

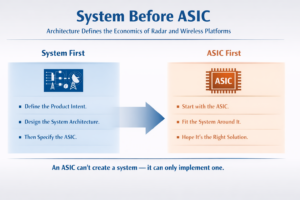

- System Before ASIC

- The Cost of Certainty

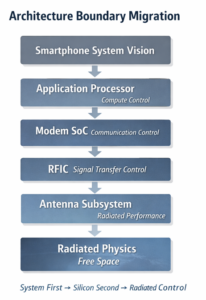

- System First, Silicon Second

- Authenticate Before You Communicate

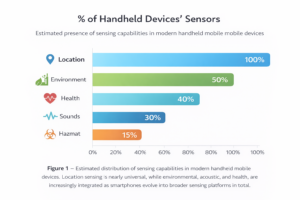

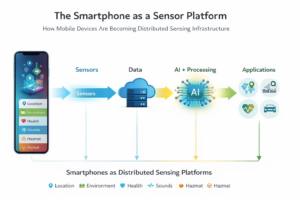

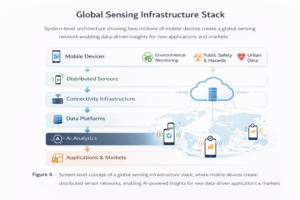

- The Smartphone as a Sensor Platform

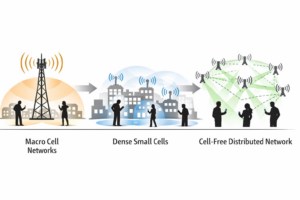

- From Small Cells to Cell-Free Networks

- Validation Is Not Testing

- When Product Mistakes Appear as Engineering Problems

- Risk as a Competitive Advantage

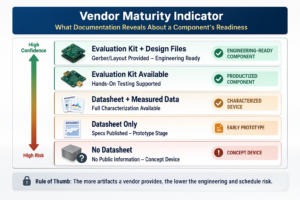

- Before You Design: A Practical Due-Diligence Process for Selecting Electrical Components

- The Hidden Cost of Skipping Product Decomposition

- How to Find the Right Application for Your Technology

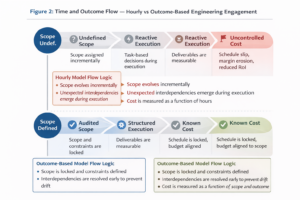

- The Hidden Cost of Hourly Engineering Engagement

- Product Success Is Defined Before Engineering Begins

- Why Deep-Technology Customer Discovery Often Fails

- Why Undefined Scope Leads to Endless Exploration

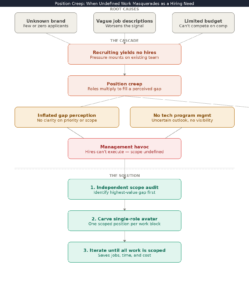

- Your Startup Isn’t Understaffed. It’s Unscoped.

- Don’t Gamble With Your Product in an Unknown Market

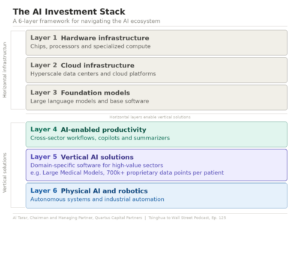

- The AI stack, mapped. The risk and opportunity in the eyes of one investor.

- What happens when you find another market for your product?

The Hiring Process Didn’t Break—It Was Replaced

Anyone actively looking for a job today has noticed a fundamental shift in the job market and recruiting process.

There are countless job postings, yet many remain open for months—or even over a year—without any apparent movement. Applicants submit resumes and hear nothing beyond an automated acknowledgment. No interview. No rejection. No closure.

Why?

When Job Postings Were Real Jobs

Not long ago, job postings described actual work that needed to be done. They outlined responsibilities, qualifications, and working conditions required to succeed. When you applied, one of two things happened:

- You were invited to interview, or

- You received a polite rejection explaining that another candidate was selected.

It might take a few weeks—occasionally a couple of months—but you knew someone reviewed your application. The position was real. The company was actively hiring.

Rejection was disappointing, but the process was transparent and human.

Today’s Reality: Advertisements, Not Open Roles

Today, job postings often function more like brand advertisements:

“Do you want to work on cool stuff?”

“Join a fast-paced, innovative environment!”

The role may exist on paper, but not necessarily in practice.

Applicants are funneled into automated systems, asked to re-enter information already present on their resumes, and required to answer generic or irrelevant questions such as “Why are you interested in this position?”—before any human interaction occurs.

A large percentage of candidates never progress beyond this stage. Many never hear back at all.

The Rise of Talent Acquisition (and Its Decline)

Traditional recruiting focused on connection:

- Understanding the candidate

- Explaining the role

- Establishing mutual value before moving forward

That model has largely been replaced by talent acquisition, which is primarily about data collection.

Candidates are evaluated virtually, using keywords, filters, and scoring models. There is minimal personal interaction, and little explanation of why a candidate is being contacted or rejected.

If you are contacted by a “talent acquisition” professional today, the interaction often begins with a request for your resume—before any meaningful discussion about how you could contribute or why the role might benefit you.

What prompted the outreach?

Usually keyword matching—not understanding.

AI Is the Next Step, Not the Exception

As digital transformation accelerates, even talent acquisition roles are becoming replaceable.

If early-stage hiring decisions are driven by:

- Keyword searches

- Automated screening

- Minimal human judgment

then AI systems can perform the same function, faster and cheaper.

Recent layoff trends support this reality: HR and talent acquisition roles have been disproportionately impacted across many organizations.

Companies operating in a highly competitive global economy scrutinize operating expenses (OPEX). The conclusion is predictable:

- Automate what can be automated

- Outsource what doesn’t differentiate

- Minimize cost wherever possible

Hiring is no exception.

Why Some Job Postings Never Close

In many cases, managers are searching for an extremely specific combination of:

- Skills

- Experience

- Personality

- Demographics

- Budget constraints

Only the skills appear in the job posting.

Until that “perfect” candidate appears—or internal priorities change—the role remains posted, creating the illusion of active hiring when none exists.

The Exceptions That Prove the Rule

There are still cases where full-cycle recruiting exists:

- Market-dominant companies

Organizations with strong market positions can afford professional recruiters, competitive compensation, and real engagement.

- Executive or VP-level hiring

These roles demand careful selection. Candidates are contacted directly, expectations are clearly communicated, and conversations are mutual and professional.

These cases are increasingly rare.

The Question That Matters

If you are currently employed, ask yourself:

How were you recruited for your current role?

Was it through a human connection—or a system?

Understanding that answer explains why today’s job market feels so different—and why job seekers must adapt their strategies accordingly.

Executive Security Briefing: Fake Recruiters as Data-Harvesting Threats

Threat Overview

Fake recruiters should be treated as a social-engineering attack vector, not a hiring activity.

Their primary objective is personal and professional data collection, not recruitment.

Once an individual responds to an unsolicited recruiter message, the attacker confirms:

- The identity is active and reachable

- The target is willing to engage

- Additional data can be incrementally extracted

This initiates progressive information harvesting.

Attack Pattern (Observed Behavior)

- Initial Contact

- Vague job opportunity

- Generic skill alignment

- No verifiable employer or role

- Engagement Trigger

- Request for confirmation of interest

- Request to move communication to WhatsApp, Telegram, or phone

- Progressive Data Collection

Over multiple messages, attackers request:

- Personal phone numbers or messaging IDs

- Expanded resume details

- Employment verification framed as “next steps”

- Location, availability, travel flexibility

- Informal confirmation of current employer or role

Each data point appears benign in isolation.

Why This Matters

Aggregated personal data enables:

- Highly targeted phishing campaigns

- Executive impersonation and business-email compromise (BEC)

- Credential-harvesting attacks

- Identity fraud

- Sale of executive and engineer profiles on underground markets

In many cases, no interview, offer, or client ever exists.

The interaction continues only as long as new information can be extracted.

Risk to the Organization

- Employees become reconnaissance assets without realizing it

- Public-facing engineers and executives are high-value targets

- Attackers map internal roles, reporting lines, and expertise

- Future attacks become more credible and harder to detect

This is a low-cost, high-return threat vector for adversaries.

Defensive Guidance (Executive-Approved Policy)

Employees should:

- Treat unsolicited recruiter outreach as untrusted by default

- Avoid sharing personal contact information

- Keep communication on LinkedIn or corporate email only

- Require a live meeting before providing additional details

Security teams should:

- Include fake recruiter scenarios in phishing simulations

- Train staff on “progressive data harvesting” tactics

- Encourage reporting of suspicious recruiter outreach

- Protect executives and technical leaders as priority targets

Key Takeaway for Leadership

If a “recruiter” avoids transparency, resists live meetings, or pushes off-platform communication, this is not a hiring issue — it is a security issue.

Early disengagement prevents downstream attacks.

From Silicon to Software: Architecting Digital Transformation

System Architecture, ASIC, Antenna, and Software Engineering for Software-Defined Platforms

ORTENGA provides system-level engineering and architecture services that enable digital transformation across silicon, RF, hardware, firmware, and software.

We help software-centric companies and technology-driven enterprises reduce risk, accelerate time-to-market, and preserve margins by orchestrating custom ASIC design, antenna and RF systems, embedded firmware, and platform software under a unified architecture.

Digital Transformation Without Silicon Risk

Modern digital transformation demands more than software alone.

Advanced platforms in SATCOM, radar, and terrestrial wireless communications require tight integration between:

- Custom ASIC architecture and design

- Antenna design and development

- RF and hardware system engineering

- Firmware and embedded software

- Platform and system software

ORTENGA ensures these layers are architected together from day one, eliminating costly re-spins, yield surprises, and post-silicon delays.

What ORTENGA Delivers

- System architecture and interface definition for multi-vendor environments

- ASIC and silicon architecture consulting aligned to software requirements

- Antenna and RF design for SATCOM, radar, and wireless platforms

- Hardware and firmware development from pre-silicon to deployment

- Software-defined system integration across physical and digital layers

Industries We Support

SATCOM • Radar Systems • Wireless Communications • Software-Defined Platforms • Advanced Electronics

Why ORTENGA

Because digital transformation fails when architecture is treated as an afterthought.

ORTENGA brings cross-disciplinary engineering leadership to ensure your software strategy is grounded in correct system design, physics, and silicon realities.

→ Speak with a System Architect

Antenna Testing, OTA Validation, RF Compliance, and ASIC Validation for Deep-Tech Systems

In advanced semiconductor, RF, and space-grade systems, testing does not improve a design — it proves whether the architecture delivers what it claims.

For ASIC validation, antenna testing, RF compliance, OTA validation, SATCOM systems, and AI accelerators, test results reflect system health only against defined requirements. Pass or fail outcomes are determined by architectural decisions, electromagnetic design, and integration discipline — long before hardware enters the lab, chamber, or test range.

Testing reveals reality. It does not change it.

Domain-Specific Testing and Validation Capabilities

Deep-tech systems demand purpose-built test strategies. Generic testing is insufficient when physics, silicon limits, and regulatory constraints intersect.

ASIC Validation & Post-Silicon Testing

ORTENGA supports ASIC validation across pre-silicon assumptions and post-silicon reality:

- Feature existence and architectural behavior validation

- Timing closure, power integrity, and interface compliance

- Correlation between simulation, emulation, and silicon measurements

- Identification of architectural limitations before re-spins

Our ASIC validation approach ensures that claimed capabilities are measurable and defensible.

RF Testing and RF Compliance Validation

RF performance is defined as much by layout and packaging as by schematic intent. ORTENGA provides RF testing and RF compliance validation focused on real-world behavior:

- Gain, noise figure, linearity, spurious emissions

- Isolation, coupling, and EMC/EMI risk assessment

- Sensitivity to environmental and mechanical variation

- Compliance alignment with regulatory and contractual requirements

RF compliance testing is treated as a design risk management function, not a late-stage checkbox.

Antenna Testing and OTA Validation

Antenna testing and OTA (Over-The-Air) validation are critical for modern wireless, SATCOM, and phased-array systems. ORTENGA validates antenna behavior at the system level, not just in isolation.

- Radiation pattern and beamwidth measurement

- Efficiency, polarization, sidelobes, and coupling effects

- OTA validation of integrated RF + antenna systems

- Phased-array and beamforming performance under operational conditions

Our antenna testing approach connects electromagnetic measurements directly to architectural intent and system claims.

SATCOM System Testing (GEO, MEO, LEO)

For SATCOM payloads and terminals, ORTENGA supports end-to-end RF and OTA validation:

- Link budget verification and system-level performance

- Interference, jamming, and coexistence scenarios

- Mobility, dynamic beam management, and network behavior

- Alignment with commercial, defense, and space-grade requirements
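As a rough illustration of the link budget verification item above, the carrier-to-noise-density ratio can be estimated from EIRP, receiver G/T, and free-space path loss. This is a minimal sketch rather than a description of ORTENGA's procedure. The function names and every numeric value (EIRP, G/T, slant range, frequency, required Eb/N0, bit rate) are hypothetical, and a real budget would also include atmospheric, pointing, polarization, and implementation losses.

```python
import math

C = 299_792_458.0        # speed of light, m/s
BOLTZMANN_DBW = -228.6   # 10*log10(Boltzmann constant), dBW/K/Hz

def fspl_db(distance_m, freq_hz):
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / C)

def cn0_dbhz(eirp_dbw, g_over_t_dbk, path_loss_db, other_losses_db=0.0):
    """Carrier-to-noise-density ratio: EIRP + G/T - losses - 10*log10(k)."""
    return eirp_dbw + g_over_t_dbk - path_loss_db - other_losses_db - BOLTZMANN_DBW

def link_margin_db(cn0, required_ebn0_db, bit_rate_bps):
    """Margin between the available C/N0 and the C/N0 needed at this bit rate."""
    required_cn0 = required_ebn0_db + 10 * math.log10(bit_rate_bps)
    return cn0 - required_cn0

# Hypothetical LEO downlink: 1000 km slant range at 12 GHz
loss = fspl_db(1_000_000.0, 12e9)
cn0 = cn0_dbhz(eirp_dbw=50.0, g_over_t_dbk=10.0, path_loss_db=loss)
margin = link_margin_db(cn0, required_ebn0_db=5.0, bit_rate_bps=100e6)
print(f"FSPL {loss:.1f} dB, C/N0 {cn0:.1f} dBHz, margin {margin:.1f} dB")
```

Comparing the computed margin against chamber or OTA measurements is one way to link a test result back to a stated system requirement.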

AI Accelerator Validation

For AI accelerators, testing confirms that architectural claims translate into measurable outcomes:

- Performance, determinism, and efficiency validation

- Workload-specific scaling and system interaction

- Correlation between micro-architecture and observed behavior

From Architecture to Defensible Test Evidence

A system is not credible because it was tested — it is credible because the right antenna, RF, OTA, and ASIC validation tests were planned and executed.

Effective test plans are derived from:

- System architecture and electromagnetic trade studies

- Known failure modes and prior silicon or field data

- Correlation across simulation, chamber testing, OTA validation, and system measurements

Disciplined test procedures ensure:

- Repeatability and traceability

- Clear linkage between requirements and results

- Data that withstands engineering reviews, audits, and patent scrutiny

Why Late Testing Is Expensive

In ASIC, RF, antenna, and SATCOM programs, late or misaligned testing often leads to:

- Additional silicon spins or antenna re-tuning cycles

- RF compliance failures that cannot be corrected post-fabrication

- Weak or indefensible technical claims in proposals or patents

Early alignment between ASIC validation, antenna testing, OTA validation, and RF compliance strategy reduces risk and preserves capital.

Testing as a Strategic Advantage

At ORTENGA, antenna testing, OTA validation, RF compliance, and ASIC validation are integrated into system architecture and IP strategy.

We help deep-tech teams:

- Validate the existence and boundaries of critical features

- Correlate silicon, RF, and antenna measurements with design intent

- Produce defensible technical evidence for invention disclosures and patents

Whether validating a custom ASIC, RF subsystem, antenna or phased-array system, SATCOM payload, or AI accelerator, ORTENGA ensures that what you design, what you measure, and what you claim are aligned.

Partner with ORTENGA to turn testing into defensible proof — for products, programs, and patents.

Who Is an Entrepreneur?

In the high-tech industry, an entrepreneur is someone driven by deep curiosity—someone who identifies a real pain point in the market and recognizes it as a high-value opportunity.

The entrepreneur then explores viable solutions, evaluates trade-offs, and ultimately down-selects a single approach that shows a credible path to return on investment (ROI). By nature, entrepreneurs take calculated risks, committing capital, time, and reputation based on informed judgment rather than certainty.

If and when the investment pays off, the return is expected to deliver sufficient margin to reward all stakeholders: founders, employees, and investors alike.

For that outcome to occur, many components must align: technology execution, market timing, customer adoption, team capability, capital efficiency, and operational discipline. Coordinating and executing across these dimensions is what makes entrepreneurship both challenging and deeply engaging.

This is why we are drawn to entrepreneurial stories—not just for the success, but for the decisions, risks, and persistence behind them.

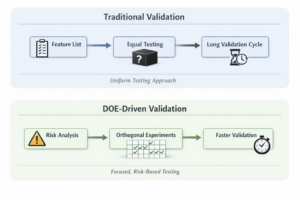

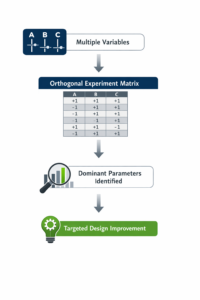

Design of Experiments: Engineering Yield in ASIC, RF, and Antenna Systems

In advanced ASIC, RF, and antenna systems, yield is engineered, not accidental.

Modern products operate at the intersection of tightly coupled electrical, physical, and process variables, where small variations can create disproportionate performance issues. Traditional trial-and-error or one-parameter-at-a-time approaches are no longer viable.

Design of Experiments (DOE) provides a data-driven framework to:

- Identify critical parameters

- Understand variable interactions

- Convert complex designs into robust, high-yield products

Tip: DOE turns complex, multi-variable systems into predictable, manufacturable products—saving time, cost, and iterations.
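To make the idea concrete, here is a minimal sketch of a two-level full-factorial experiment and the estimation of main and interaction effects. It is an illustration under stated assumptions, not ORTENGA's methodology: the factor names and the `simulated_yield` response model are hypothetical stand-ins for measured data, and real studies often use fractional or orthogonal-array designs to reduce the number of runs.

```python
from itertools import product

def full_factorial(names):
    """Return every combination of coded levels (-1, +1) for the named factors."""
    return [dict(zip(names, levels)) for levels in product((-1, 1), repeat=len(names))]

def effect(runs, responses, *names):
    """Estimate a main effect (one name) or an interaction effect (several names).

    The effect is the mean response where the product of the coded levels
    is +1 minus the mean response where that product is -1.
    """
    high, low = [], []
    for run, y in zip(runs, responses):
        sign = 1
        for name in names:
            sign *= run[name]
        (high if sign > 0 else low).append(y)
    return sum(high) / len(high) - sum(low) / len(low)

def simulated_yield(run):
    """Hypothetical response model standing in for measured wafer yield (%)."""
    return (70
            + 8 * run["focus"]
            + 1 * run["etch_bias"]
            + 3 * run["focus"] * run["gate_length"])

factors = ["focus", "gate_length", "etch_bias"]
runs = full_factorial(factors)                 # 2**3 = 8 runs
responses = [simulated_yield(r) for r in runs]

for name in factors:
    print(f"main effect of {name}: {effect(runs, responses, name):+.1f}")
print(f"focus x gate_length: {effect(runs, responses, 'focus', 'gate_length'):+.1f}")
```

In this toy model, one factor dominates and a second matters only through an interaction. A one-parameter-at-a-time sweep would miss that interaction, which is the structure DOE is meant to expose.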

Historical Context: Deming & Taguchi

In the 1950s, Japanese automakers struggled with inconsistent quality and low production yield. As complexity increased, root-cause identification became slow and ineffective.

The introduction of W. Edwards Deming’s statistical thinking and Genichi Taguchi’s orthogonal DOE methods transformed manufacturing into systematic engineering. These principles—controlling variation, identifying dominant factors, and designing for robustness—now underpin semiconductor, RF, and antenna manufacturing at scale.

How ORTENGA Can Help

ORTENGA works with engineering teams facing these exact challenges. Whether the issue appears as low wafer yield, RF performance spread, or inconsistent OTA results, ORTENGA applies structured DOE methodologies across silicon, RF, and antenna domains.

By correlating measured data with process conditions, design parameters, and test outcomes, ORTENGA identifies the critical contributors to variability and provides clear, actionable recommendations:

✅ What to adjust

✅ What to tighten

✅ What to leave unconstrained

Callout: Faster root-cause isolation, fewer iterations, and engineering decisions driven by data—not assumptions.

Real-World Examples

1️⃣ Wafer Yield in Advanced ASIC Manufacturing

Challenge: Early silicon often exhibits parametric variation, leading to wafer yields of 30–50%.

Critical factors: lithography focus, gate length, spacer dimensions, etch bias.

DOE solution: ORTENGA identifies dominant process variables, enabling targeted adjustments that can improve wafer yield to over 90% without a full process re-spin.

2️⃣ RF Power Amplifier (PA) Efficiency

Challenge: Lot-to-lot variation affects output power, linearity, and thermal margins.

DOE solution: DOE across transistor geometry, matching networks, bias conditions, and substrate properties uncovers root causes of efficiency spread.

⚡ Impact: Correcting dominant factors can reduce efficiency spread by over 50%, enabling predictable RF performance.

3️⃣ Antenna OTA Performance Spread

Challenge: Devices may pass conducted RF tests but fail OTA validation due to variability in total radiated power (TRP) and total isotropic sensitivity (TIS).

DOE solution: DOE evaluates antenna geometry, PCB stack-up, ground clearance, and enclosure tolerances.

Result: Mechanical tolerances are often the dominant source of OTA spread. DOE-driven adjustments reduce variation and ensure repeatable OTA compliance across production volumes.

DOE as a Competitive Advantage

Across wafer fabrication, RF ICs, and antenna systems, DOE allows teams to:

- ✅ Identify critical process parameters (CPPs) quickly

- ✅ Reduce performance spread without over-constraining the process

- ✅ Achieve Six Sigma-level robustness with fewer iterations

Callout: DOE is more than a statistical tool—it is a force multiplier. Organizations that master DOE with ORTENGA ramp faster, yield higher, and ship with confidence.

The Truth About 5G Radiation: What Science Really Says

5G Health Concerns

ORTENGA has received many inquiries regarding potential health effects of 5G technology. While ORTENGA does not provide consulting on biological effects of electromagnetic waves, we share information to help consumers and clients make informed decisions.

What We Don’t Know

Since the 1970s, numerous studies have investigated the impact of electromagnetic fields (EMF) on human tissues. No definitive scientific conclusion has been reached. Nevertheless, equipment exists to systematically and scientifically study cause-and-effect relationships.

What We Know

Human body response to electromagnetic fields depends on:

- Operating frequency

- Field strength

- Tissue type (soft vs. hard tissue)

Safety assessments must consider all of these factors to be meaningful. Statistically, most individuals respond similarly under controlled conditions, though responses may vary by age, gender, and other factors.

Frequency of Operations

Radio waves cover a huge spectrum—from kHz (AM radio) to mmWave (24–60 GHz) used in 5G and advanced Wi-Fi standards. The body and devices respond differently across this range. Let’s look at some key examples:

- MRI Imaging

- Operating frequency: approximately 42.6–63.9 MHz (hydrogen resonance at 1–1.5 Tesla)

- Purpose: Non-invasive imaging of soft tissues

- Observation: Negligible impact if the body remains still; movement can generate eddy currents, causing discomfort.

- Takeaway: Focused EMF can be safe for controlled diagnostic use.

- Wearable Sensors (IEEE 802.15.6)

- Operating frequency: 20 MHz – 2.4 GHz

- Purpose: Body-worn health monitoring

- Observation: Lower frequencies couple more efficiently to the body, and these links are intentionally designed for safe measurement.

- Cellular Phones (1–2 GHz)

- Regulated exposure (SAR limit): ≤ 1.6 W/kg, averaged over 1 g of tissue (FCC)

- Typical phone transmit power: ~0.01–0.25 W

- Observation: Even at maximum power, radiation is below regulatory limits. Bystanders receive much lower exposure than the user.

- Takeaway: Non-users nearby are statistically exposed to extremely low EMF levels.

- Hyperthermia (Cancer Treatment)

- Operating frequency: 100–900 MHz

- Purpose: Non-invasive tumor treatment by heating tissue to ~44°C

- Observation: Frequency and power are chosen to target tumors specifically while sparing surrounding tissue.

- 5G mmWave (24–39 GHz)

- Observation:

- mmWave links experience high path loss (and, near 60 GHz, strong atmospheric absorption), so power density falls off quickly with distance.

- Beamforming directs energy precisely at the intended user.

- Bystanders and nearby individuals are minimally exposed.

- Takeaway: Unlike legacy 1G–4G networks, beamformed 5G mmWave transmission is highly localized, reducing incidental exposure.
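The MRI operating frequencies in the list above follow from the Larmor relation, f = (γ/2π)·B, for hydrogen nuclei. The sketch below assumes the commonly quoted value of about 42.58 MHz per tesla for hydrogen. The function name is illustrative only.

```python
GAMMA_BAR_MHZ_PER_T = 42.577  # hydrogen-1 gyromagnetic ratio divided by 2*pi, MHz/T

def larmor_frequency_mhz(field_tesla):
    """Resonance (Larmor) frequency of hydrogen nuclei in a static field, in MHz."""
    return GAMMA_BAR_MHZ_PER_T * field_tesla

for b in (1.0, 1.5, 3.0):
    print(f"{b:.1f} T -> {larmor_frequency_mhz(b):.1f} MHz")
```

The relation is linear in field strength, so higher-field scanners operate at proportionally higher radio frequencies while remaining far below cellular bands.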

Key Insights

- MRI and hyperthermia operate below 1 GHz, and body-area wearables reach down to tens of MHz, where interaction with the body is intended.

- Higher frequencies (mmWave) have low tissue penetration and significant air loss, further limiting exposure.

- 5G’s beamforming technology focuses energy on users, unlike older networks that radiate broadly.
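The loss over the air mentioned in the insights above can be approximated with the free-space path loss formula, FSPL = 20·log10(4πdf/c). The sketch below compares a mid-band carrier with two mmWave carriers at the same distance. It assumes isotropic antennas and ignores atmospheric absorption, blockage, and beamforming gain, so it shows only the frequency-dependent spreading term. The chosen frequencies and distance are example values.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def fspl_db(distance_m, freq_hz):
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / C)

def extra_loss_db(freq_hz, reference_hz):
    """Additional path loss at freq_hz relative to reference_hz at equal distance."""
    return 20 * math.log10(freq_hz / reference_hz)

for f_ghz in (2.0, 28.0, 39.0):
    loss = fspl_db(100.0, f_ghz * 1e9)
    print(f"{f_ghz:4.1f} GHz at 100 m: {loss:5.1f} dB")
print(f"28 GHz vs 2 GHz: {extra_loss_db(28e9, 2e9):.1f} dB more loss")
```

In practice, mmWave systems recover much of this loss with high-gain beamformed arrays, which is also why the radiated energy is concentrated toward the intended user.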

Can We Say 5G is Safe?

Based on the evidence and comparison to previous generations:

- 5G is not inherently less safe than 4G/LTE, 3G/CDMA, 2G/GSM, or 1G/AMPS, when used appropriately.

- If you have concerns about mobile communications in general, additional research is encouraged.

About ORTENGA

ORTENGA is an elite engineering network specializing in wireless systems, antenna design, ASIC development, and algorithm solutions. The organization helps clients—from startups to global enterprises—complete complex technical Statements of Work on budget, on schedule, and with technical precision by providing access to top‑tier subject‑matter experts who translate business requirements into practical technical solutions.

ORTENGA’s engineering expertise spans multiple industries including Autonomous Automotive, SATCOM, Radar, Smart City, Wi‑Fi, Mobile Terrestrial Radio Communications, and next‑generation 6G technologies.

Rather than serving as a traditional staffing agency, ORTENGA’s model scales engineering talent and technical leadership to meet the needs of each unique project, helping partners rapidly staff teams and accelerate product development with clear scoping, scheduling, and budgeting.

For inquiries or more information, ORTENGA can be contacted via info@ortenga.net.

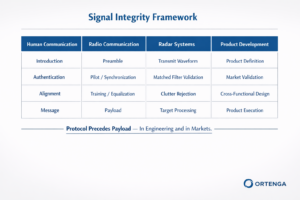

Signal Distortion vs. Signal Jamming: A Receiver-Centric Perspective

Subtitle: Why Receiver Nonlinearity, Dynamic Range, and Intent Matter More Than Interference Alone

Signal distortion originates within the receiver system itself.

Every receiver has a finite dynamic range. When the desired incoming signal exceeds the receiver’s upper linear limit, the front end and/or subsequent stages introduce distortion due to inherent nonlinearities (e.g., compression, intermodulation, desensitization).

Signal distortion is typically unintentional and arises as a byproduct of receiver limitations, design tradeoffs, or operating conditions. Importantly, even when distortion is present, a receiver may still detect, demodulate, and interpret the underlying information—albeit with degraded performance.
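The finite dynamic range described above can be put into numbers with three standard receiver relations: the thermal noise floor, the third-order intermodulation level for two equal tones, and the spur-free dynamic range (SFDR). This is a minimal sketch. The bandwidth, noise figure, IIP3, and tone levels are hypothetical example values, not figures from any particular receiver.

```python
import math

def noise_floor_dbm(bandwidth_hz, noise_figure_db):
    """Thermal noise floor near 290 K: -174 dBm/Hz + 10*log10(BW) + NF."""
    return -174.0 + 10 * math.log10(bandwidth_hz) + noise_figure_db

def im3_input_referred_dbm(tone_dbm, iip3_dbm):
    """Input-referred third-order product for two equal tones: 3*P - 2*IIP3."""
    return 3 * tone_dbm - 2 * iip3_dbm

def sfdr_db(iip3_dbm, floor_dbm):
    """Spur-free dynamic range: (2/3) * (IIP3 - noise floor)."""
    return (2.0 / 3.0) * (iip3_dbm - floor_dbm)

# Hypothetical receiver: 1 MHz bandwidth, 5 dB noise figure, -10 dBm IIP3
floor = noise_floor_dbm(1e6, 5.0)
sfdr = sfdr_db(-10.0, floor)
im3 = im3_input_referred_dbm(-30.0, -10.0)

print(f"noise floor: {floor:.1f} dBm")
print(f"SFDR: {sfdr:.1f} dB")
print(f"IM3 from two -30 dBm tones: {im3:.1f} dBm "
      f"({im3 - floor:.1f} dB above the noise floor)")
```

Input levels that push the intermodulation product above the noise floor are where distortion begins to degrade demodulation, whether the strong signal arrives by accident or by intent.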

Signal jamming, by contrast, is an intentional act in which a transmitter deliberately renders a receiver unusable or unreliable. The objective is not merely to distort the signal, but to deny communication, navigation, or sensing capability.

A common jamming approach is brute-force jamming, where high-power interference drives the receiver into severe distortion or saturation beyond its tolerance limits. In this case, jamming is achieved by inducing distortion.

However, signal jamming does not require overwhelming the receiver. More sophisticated techniques—such as deceptive, protocol-aware, or waveform-matched jamming—can disrupt receiver operation within its nominal dynamic range, without obvious overload or compression effects.

Key Distinction

- Distortion is a receiver-intrinsic phenomenon and often unavoidable.

- Jamming is an externally imposed, intentional action designed to exploit or exceed receiver vulnerabilities.

The distinction is subtle, but for the trained eye, the difference lies in intent, mechanism, and observable system behavior rather than in signal impairment alone.

Technical Product Strategy Brief


From Silicon to System for Semiconductor, RF, Radar, SATCOM, and AI Hardware Startups

Objective

Enable deep-tech startups to convert silicon-, RF-, radar-, or algorithm-level innovation into a deployable, scalable, and revenue-generating system product, while managing risk, cost, manufacturability, and time-to-market.

The Industry-Specific Challenge

Semiconductor, RF, Radar, SATCOM, and AI hardware startups face a common problem:

They prove the core technology, but fail at productization.

Typical failure points include:

- Optimizing silicon, RF, or radar subsystem performance without system-level cost and integration awareness

- Underestimating antenna, packaging, thermal, and power constraints

- Late discovery of certification, compliance, or deployment issues

- Misalignment between hardware capability and software/algorithm readiness

In these domains, system limitations—not silicon performance—often define product success.

Silicon-to-System Product Strategy Framework

- System-Level Market Definition (Not Chip-Level)

Product strategy must begin with the end system, not the IC, radar module, RF block, or model.

Examples by domain:

- Semiconductor: Edge device, accelerator card, module, or SoC-based platform

- RF / mmWave: Integrated radio, front-end module, or complete RF subsystem

- Radar: Sensor module, phased array radar, automotive or aerospace system

- SATCOM (GEO/LEO): Terminal, payload component, phased array, or end-user system

- AI Hardware: Standalone accelerator, embedded edge system, or data-center module

Key questions:

- What system metric defines value? (Throughput, latency, EIRP, SWaP-C, detection range, TOPS/W, etc.)

- Who buys the product—and who integrates it?

- What legacy system must be displaced?

Output: System-level requirements that drive all technical decisions.

- Opportunity Filtering Across Use Cases

Most technologies enable multiple markets:

- Commercial vs. defense

- Edge vs. infrastructure

- Ground vs. airborne vs. space

Each opportunity must be evaluated for:

- System complexity

- Certification and regulatory burden

- Time-to-market

- Capital intensity

Common mistake: Chasing the largest market instead of the fastest achievable product.

Output: Ranked product roadmap based on risk-adjusted return.
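One way to sketch such a ranking is to weight each opportunity's projected return by its estimated probability of success and normalize by capital and time-to-market. The scoring formula, the `Opportunity` fields, and all numbers below are assumptions for illustration. The brief does not prescribe a specific metric.

```python
from dataclasses import dataclass

@dataclass
class Opportunity:
    name: str
    expected_return: float      # projected return, arbitrary currency units
    success_probability: float  # 0..1, judged from complexity and certification burden
    capital_required: float     # same units as expected_return
    months_to_market: float

def risk_adjusted_score(opp):
    """Probability-weighted return per unit of capital per year to market (assumed metric)."""
    years = opp.months_to_market / 12.0
    return opp.success_probability * opp.expected_return / (opp.capital_required * years)

def rank(opportunities):
    """Order opportunities from highest to lowest risk-adjusted score."""
    return sorted(opportunities, key=risk_adjusted_score, reverse=True)

# Hypothetical candidates for one core technology
candidates = [
    Opportunity("defense airborne radar", 200.0, 0.2, 40.0, 48.0),
    Opportunity("commercial edge module", 60.0, 0.6, 10.0, 18.0),
    Opportunity("space-qualified payload", 300.0, 0.1, 80.0, 60.0),
]
roadmap = rank(candidates)
for opp in roadmap:
    print(f"{opp.name}: {risk_adjusted_score(opp):.3f}")
```

With these example inputs, the smallest market ranks first because it is the fastest and cheapest to reach, which mirrors the common mistake noted above.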

- Silicon and Architecture Alignment

Silicon and radar system decisions must reflect system and lifecycle realities:

- Process node vs. yield, cost, and radiation tolerance (for SATCOM or radar)

- Integration vs. chiplet/modular approaches

- Analog, RF, radar, and digital partitioning

- Testability, calibration, and field updates

Typical failure: Over-optimizing peak performance while ignoring manufacturability and system margin.

Output: Architecture aligned with product volume, deployment model, and upgrade path.

- RF, Radar, Antenna, and Packaging Co-Design

For RF, radar, mmWave, and SATCOM systems, system performance is dominated by:

- Antenna and radar array architecture and placement

- Package and interconnect loss

- Thermal management

- Mechanical and form-factor constraints

- EMI/EMC and regulatory compliance

Late-stage RF or radar fixes are expensive and often ineffective.

Output: Early co-design of radar, antenna, RFIC, package, and mechanical enclosure.

- Algorithms, Firmware, and Software Synchronization

For AI, radar, and advanced RF systems:

- Algorithms must tolerate real-world impairments

- Firmware must support calibration, yield variation, and updates

- Software defines customer-visible differentiation

Common failure: Hardware readiness without production-quality software.

Output: A synchronized hardware–software–algorithm release roadmap.

- Manufacturability, Deployment, and Scale

A lab prototype is not a product.

Key considerations:

- DFM / DFT

- Yield sensitivity and margin

- Supply-chain readiness

- Certification timelines (FCC, CE, DO-160, space qualification, safety)

- Field deployment and support model

Output: A system that can be built, certified, shipped, and supported at scale.

Organizational Risk: The Single-Discipline Leadership Trap

Most deep-tech startups are led by:

- Exceptional technologists, or

- Strong business strategists

Rarely both.

This leads to products that are either:

- Technically superior but commercially misaligned, or

- Market-driven but architecturally weak

Successful productization requires cross-disciplinary, system-level leadership.

ORTENGA’s Role — From Silicon to System

ORTENGA provides on-demand access to senior, multi-disciplinary engineering expertise across:

- Radar, antenna, and RF systems

- ASIC and semiconductor architecture

- Algorithms and signal processing

- Hardware, firmware, and full system integration

ORTENGA helps startups:

- Select the right product opportunity early

- Align silicon, RF, radar, and system decisions

- Reduce late-stage redesign risk

- Shorten time-to-market

- Preserve capital while scaling capability

Learn more at: https://ortenga.net/

Outcome

Startups that adopt a silicon-to-system product strategy:

- Reduce technical and market risk

- Avoid costly pivots

- Deliver differentiated, deployable systems

- Accelerate the path to revenue and scale

ORTENGA turns deep technology into real-world systems—and systems into products.

The Leadership Challenge: Turning Bold Ideas into Market Wins

Picking the right product is just the first step. The real challenge? Having engineering leadership that truly believes in your technology.

In a startup, the CTO or VP of Engineering isn’t just a manager—they are the first believer in your product. Anything less—half-hearted commitment, slow decisions, or lukewarm support—spreads through the team, slowing development and jeopardizing your first-mover advantage.

Startups can’t afford the luxury of large portfolios or endless resources. Speed, focus, and bold execution are survival skills. Every decision matters; every delay risks your ROI.

Engineering leaders must:

- Actively embody the founder’s vision, not just echo it.

- Make rapid, high-impact decisions that keep your product moving.

- Lead teams with clarity, commitment, and transparency.

At ORTENGA, we help startups turn disruptive ideas into market-ready products. From Silicon to System, our elite network of antenna, ASIC, and algorithm engineers accelerates your development timeline, reduces risk, and ensures your innovation reaches the market first and in a strong competitive position.

Startup Success from Silicon to System: Product, Leadership, and Scaling

High-tech startups face a series of critical hurdles before they can succeed and deliver returns to investors. While the first two challenges test a company’s product strategy and technical leadership, the third challenge—scaling—often determines whether a startup thrives or fails.

Challenge 1: Picking the Right Product

Every deep-tech startup begins with innovation—silicon, RF, radar, SATCOM, or AI hardware—but not every technology becomes a marketable product. The first challenge is selecting the opportunity with the highest potential return.

Many startups fail at this stage because they optimize individual subsystems without system-level awareness. Common pitfalls include:

- Over-focusing on silicon, RF, or radar performance without considering cost, manufacturability, or integration constraints.

- Underestimating antenna, packaging, thermal, and power challenges.

- Misaligning hardware capabilities with software or algorithm readiness.

A sound product strategy starts from the end system, not just the chip or module. Early identification of system-level metrics, market fit, and integration requirements reduces risk and preserves resources.

Challenge 2: Committed CTO or VP of Engineering Leadership

Picking the right product is only the first step. Success requires a committed technical leader—a CTO or VP of Engineering—who embodies the founder’s vision and drives execution.

Engineering leadership in startups must:

- Believe deeply in the product and lead by example.

- Make rapid, high-impact decisions that keep development moving.

- Provide clarity, focus, and transparency to the team.

Without strong technical leadership, even the best technology can stall, delay time-to-market, or lose its first-mover advantage.

Challenge 3: Scaling the Business

Once the product is defined and leadership is committed, startups face the most decisive hurdle: scaling intelligently.

Premature scaling is a common trap: assuming wide market acceptance without supporting data, over-forecasting production volumes, or diverting scarce engineering resources to unnecessary projects. Consequences include:

- Wasted cash and other scarce resources.

- Delayed product development.

- Lost time—an irreplaceable resource.

Even industry leaders like Apple demonstrate the importance of direction over speed, using market data and measured commitments to scale efficiently.

How ORTENGA Helps Startups Succeed

ORTENGA partners with startups to navigate these three critical challenges:

- Market Analysis & Product Strategy: Identify system-level opportunities and rank product roadmaps by risk-adjusted return.

- Technical Leadership & Execution: Align cross-disciplinary engineering decisions across ASIC, RF, radar, antenna, algorithms, hardware, firmware, and software.

- Measured Scaling & Deployment: Reduce late-stage redesign risk, shorten time-to-market, and preserve capital while scaling capability.

With ORTENGA, startups can turn innovation into deployable systems, align technical and business strategy, and scale confidently without risking resources or momentum.

Reducing First-Silicon Risk—from Silicon to System

The Challenge

First silicon failure is one of the most expensive risks in hardware development. Re-spins increase cost, delay schedules, and can eliminate a product’s market opportunity entirely.

Common Causes

- Unclear or incomplete use-case definition

- Specifications not fully locked before design execution

- Gaps between specifications and implementation

- Lack of independent, experienced design review

These issues affect not only ASICs, but also antennas, RF subsystems, and system-level integrations.

The ORTENGA Advantage

ORTENGA provides an elite network of seasoned ASIC, antenna, and algorithm engineers who collaborate across disciplines to address risk early—before tape-out.

Our engineers work from Silicon to System, ensuring:

- Designs are grounded in real system use cases

- Specifications are validated and stable

- Implementation aligns with performance targets

- Independent audits identify issues early

The Result

- Reduced re-spin risk

- Controlled development cost

- Improved likelihood of first-silicon success

- Faster time to market

Semiconductor ASIC Life Cycle in the Context of Startup Company Challenges

From Concept to Scale — Silicon to System

High-tech semiconductor startups face three decisive challenges on the path from innovation to sustainable returns. The ASIC life cycle cuts across all three.

Startup Challenge #1: Product Definition — Choosing the Right ASIC

The first challenge is deciding what to build.

New technologies often support multiple potential products, each with different markets, system requirements, development costs, and time-to-market. With limited capital and resources, startups must select one or two ASIC opportunities that can realistically reach market traction before funding is exhausted.

Many ASIC failures originate here—when silicon capabilities are defined in isolation, without sufficient system-level and market validation.

ORTENGA’s role (Silicon to System):

- Translate system requirements into ASIC feature sets

- Evaluate multiple product paths and their market viability

- Align silicon architecture with real customer use cases

Startup Challenge #2: Execution — Building the Right ASIC the Right Way

The second challenge is execution.

Once the ASIC direction is selected, success depends on disciplined engineering leadership, realistic schedules, and design decisions made with productization in mind. Poor execution, over-engineering, or lack of ownership can delay tape-out, increase cost, and miss market windows.

At this stage, engineering teams must balance innovation with manufacturability, testability, power, cost, and system integration.

ORTENGA’s role (Silicon to System):

- Provide seasoned ASIC, algorithm, and system engineers

- Architect ASICs with clear performance, power, and cost targets

- Reduce execution risk through cross-disciplinary collaboration

Startup Challenge #3: Scaling — Surviving the Two-Year ASIC Life Cycle

The third and most decisive challenge is scaling.

A semiconductor ASIC typically has a two-year effective market life cycle. A successful ASIC must return its full investment within this window. After that, the product must be enhanced or re-architected—adding features, improving speed or power, reducing cost, or shrinking size.

If a successful ASIC is not upgraded, competitors will replicate functionality and erode market share.

If an ASIC fails to gain traction, it becomes obsolete and never returns the investment.

Scaling too early—based on assumptions rather than market data—often leads to excess inventory, wasted capital, and additional fundraising pressure.
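
The two-year window can be turned into a quick payback check. The sketch below is a simplification under stated assumptions: it treats gross margin as flat from the first month and ignores ramp-up, price erosion, and the cost of capital. The investment and margin figures are hypothetical.

```python
def payback_months(investment_usd: float, monthly_gross_margin_usd: float) -> float:
    """Months of steady sales needed to recover the full investment."""
    if monthly_gross_margin_usd <= 0:
        return float("inf")  # the investment is never recovered
    return investment_usd / monthly_gross_margin_usd


def returns_within_window(
    investment_usd: float,
    monthly_gross_margin_usd: float,
    window_months: int = 24,  # the two-year market life cycle described above
) -> bool:
    """True if the investment is recovered inside the market window."""
    return payback_months(investment_usd, monthly_gross_margin_usd) <= window_months


investment = 12_000_000  # hypothetical total ASIC investment in USD

print(payback_months(investment, 400_000))         # 30.0
print(returns_within_window(investment, 400_000))  # False
print(returns_within_window(investment, 600_000))  # True
```

Running the check with market data rather than forecasts is one way to decide whether volume commitments are justified before scaling.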

ORTENGA’s role (Silicon to System):

- Define upgrade and next-generation ASIC roadmaps

- Assess competitive and market landscapes before scaling

- Enable capital-efficient scaling aligned with real demand

Why Silicon to System Matters

Across all three startup challenges, failures are rarely due to silicon alone. They result from misalignment between market needs, system architecture, and ASIC execution.

ORTENGA integrates market insight, system thinking, and silicon expertise to help semiconductor startups:

- Make the right product decisions early

- Execute ASIC development with discipline and speed

- Scale only when data—not optimism—supports it

From Silicon to System, ORTENGA helps startups turn ASIC innovation into sustainable business success.

Project Risk Management — A Competitive Advantage from Silicon to System

In highly regulated, safety-critical markets such as automotive, aerospace, radar, and SATCOM, technical risk is not just a project concern—it is a business and compliance risk.

Advanced programs that push beyond existing technology face compounded risks across silicon design, RF performance, hardware integration, firmware, software, system validation, and certification. Left unmanaged, these risks lead to schedule slips, redesign cycles, certification delays, and capital inefficiencies.

Each risk, however, represents an opportunity for differentiation when managed correctly.

A Silicon to System approach enables early identification and mitigation of risks across the full technology stack—from ASIC architecture and algorithms through antennas, RF, hardware, firmware, software, and end-to-end system integration. This holistic visibility reduces late-stage surprises, shortens qualification and certification cycles, and improves first-pass success.

Partner with ORTENGA for the design and development of Automotive, Aerospace, Radar, and SATCOM systems where predictability, compliance, and reliability define competitive advantage.

ORTENGA’s seasoned, cross-disciplinary engineering teams systematically reduce technical and integration risk—helping customers meet regulatory requirements faster, protect investment capital, and accelerate time-to-market by delivering solutions that transition smoothly from silicon to certified, deployed systems.

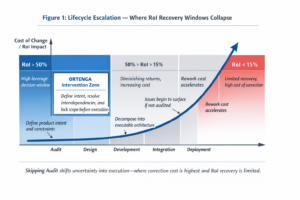

Design Audit: From Silicon to System, Protect Your ROI

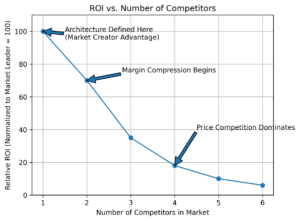

In product development, the 1x–10x–100x rule is clear:

- 1x – Fix an issue during design

- 10x – Fix during development

- 100x – Fix after production

Investing in early design validation is the smartest way to protect ROI, time to market, and engineering resources.
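
The rule lends itself to a small arithmetic sketch. The multipliers come from the rule itself. The base cost per fix and the two defect distributions are hypothetical numbers chosen only to show the effect of catching the same ten issues earlier.

```python
# Multipliers taken from the 1x-10x-100x rule.
COST_MULTIPLIER = {"design": 1, "development": 10, "production": 100}


def total_fix_cost(defects_by_phase: dict, base_cost_usd: float) -> float:
    """Total cost of fixing defects, given the phase in which each one is caught."""
    return sum(
        COST_MULTIPLIER[phase] * count * base_cost_usd
        for phase, count in defects_by_phase.items()
    )


base = 5_000  # hypothetical cost in USD to fix one issue during design

# The same ten issues, caught at different points (illustrative split).
caught_late = {"design": 2, "development": 5, "production": 3}
caught_early = {"design": 8, "development": 2, "production": 0}

print(total_fix_cost(caught_late, base))   # 1760000
print(total_fix_cost(caught_early, base))  # 140000
```

In this example, shifting most discoveries into the design phase cuts the total fix cost by more than an order of magnitude, even though the number of issues is unchanged.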

Independent Design Validation

At ORTENGA, we partner with clients to audit their product designs independently. Our elite network of Antenna, ASIC, Hardware, Firmware, and Algorithm engineers provides a fresh perspective on your architecture and system design.

The goal of the audit is simple:

- Identify hidden risks, corner cases, and assumptions missed by the design team

- Validate simulations, documentation, and system interactions

- Reduce the likelihood of costly rework during development and production

By having an objective, independent review, you gain confidence in your design before committing resources.

Why It Matters

Consider ASIC development as an example:

- Tape-out at advanced nodes (e.g., 2nm CMOS) can exceed $50M

- Individual wafers may cost $50K

- Hardware re-spins add 3–9 months of delay

- Firmware and software often require ripple fixes after ASIC changes

A design audit mitigates these risks, ensuring that every dollar invested in validation saves multiples downstream—often 10x during development or 100x in production.
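
A rough expected-value sketch shows how these figures combine. The $50M tape-out cost and the 3 to 9 month delay range are taken from the list above. The monthly burn rate, the assumption that a re-spin costs as much as the original tape-out, the re-spin probabilities, and the audit cost are hypothetical placeholders, not ORTENGA data.

```python
def respin_exposure(respin_cost_usd: float, delay_months: float, monthly_burn_usd: float) -> float:
    """Direct re-spin cost plus the cash burned while the schedule slips."""
    return respin_cost_usd + delay_months * monthly_burn_usd


def expected_audit_saving(
    exposure_usd: float,
    p_respin_without_audit: float,
    p_respin_with_audit: float,
    audit_cost_usd: float,
) -> float:
    """Expected saving from an audit: reduction in expected re-spin loss minus the audit cost."""
    risk_reduction = p_respin_without_audit - p_respin_with_audit
    return risk_reduction * exposure_usd - audit_cost_usd


tapeout = 50_000_000  # from the example above
burn = 2_000_000      # hypothetical monthly burn rate in USD

low = respin_exposure(tapeout, 3, burn)   # 3-month delay
high = respin_exposure(tapeout, 9, burn)  # 9-month delay
print(low, high)  # 56000000 68000000

# Hypothetical probabilities and audit cost, for illustration only.
saving = expected_audit_saving(low, 0.30, 0.15, 500_000)
print(f"{saving:,.0f}")
```

The result is only as good as the probability estimates, so the point of the sketch is the structure of the comparison rather than the specific number.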

ORTENGA Advantage

Our engineers are seasoned professionals who collaborate seamlessly across disciplines, regardless of geography. We provide actionable insights without duplicating your design efforts, allowing your team to focus on execution and innovation.

Partner with ORTENGA to:

- Validate your design from silicon to system

- Minimize risk and cost

- Accelerate time to market

- Maximize ROI

Every dollar spent on a design audit with ORTENGA is an investment in certainty, speed, and success.

Engineering Confidence Into Technology Investments

Silicon-to-System Risk Reduction for Automotive, Aerospace, SATCOM, and Radar

High-technology startups face a predictable sequence of challenges. Most failures occur not because of lack of innovation, but due to misaligned technology, unclear product definition, and premature scaling. ORTENGA partners with investors and technology leaders to reduce these risks across all three critical startup challenges—from concept to scale.

1st Challenge – Technology and Product Viability

The first and most fundamental challenge is proving that a technology can become a real, standards-compliant product that the market needs.

ORTENGA helps investors and founders:

- Evaluate materials, architectures, and core technologies as true product enablers

- Define clear use cases and system requirements

- Perform Silicon-to-System feasibility studies to validate performance, cost, power, size, and manufacturability

- Identify early technical and regulatory risks before capital is deployed

Materials often unlock entirely new product families.

For example:

- PolyStrata® enables a new class of low-loss, lightweight antenna and RF front-end subsystems that were previously impractical at scale.

- WavePro™ enables highly integrated antenna and RF front-end solutions for compact, low-weight systems while remaining cost-feasible.

These technologies only become investable when validated at the system and product level, not as isolated innovations.

2nd Challenge – Execution and First-Silicon Success

Once viability is established, the second challenge is executing correctly the first time—where many startups underestimate complexity.

ORTENGA reduces execution risk by:

- Translating product definition into locked specifications

- Aligning antenna, ASIC, algorithm, hardware, firmware, and software development

- Ensuring first-silicon and first-article success, avoiding costly redesigns and schedule slips

- Designing with standards compliance and certification in mind from the outset

This phase protects investor capital by preventing re-spins, missed market windows, and escalating burn rates.

3rd Challenge – Scaling Without Destroying Value

The third—and most decisive—challenge is scaling.

Many startups fail by scaling too early, over-forecasting demand, or investing in inventory and infrastructure without validated market data.

ORTENGA supports disciplined scaling by:

- Validating market readiness and system performance at volume

- Ensuring architectures scale in cost, supply chain, and manufacturing

- Avoiding over-customization that limits market adoption

- Supporting platform-based product evolution rather than one-off designs

Scaling becomes a controlled growth phase, not a financial gamble.

Partner with ORTENGA

ORTENGA advises technology investors and engineering leaders across all three startup challenges—ensuring that innovation is technically sound, executable, and scalable.

Partner with ORTENGA for Architecture, Antenna, ASIC, Algorithm, and Silicon-to-System design and development, and turn high-risk technology investments into defensible, standards-compliant, and scalable products.

Why System-Level Thinking Determines ASIC Startup Success

Startup Challenge #3: Where Vision and Engineering Leadership Become Silicon

Successful ASIC startups are rarely defined by better silicon alone. They are defined by stronger system-level decisions made long before the first line of the design is written. In industries such as automotive, aerospace, radar, and SATCOM, where performance, reliability, and regulatory compliance are critical, early system-level thinking is non-negotiable. A single misaligned specification can cascade through development, resulting in failed first silicon, delayed certification, or lost market windows, at a cost that can be 10× to 100× higher than the early design investment that would have prevented it.

The Foundation: Vision and Use Cases (Startup Challenge #1)

Startup Challenge #1—defining a clear product vision and validated use cases—directly informs ASIC specifications. Without a precise understanding of the application environment—whether it’s high-reliability radar for defense, autonomous driving systems in automotive, or SATCOM payloads with strict thermal and power budgets—even technically excellent ASICs risk being unusable. Every system has corner cases, integration constraints, and environmental stressors that must be considered; missing them is rarely recoverable after tape-out. A well-defined vision ensures that specifications address real problems for real users, not hypothetical scenarios.

The Gatekeeper: Engineering Leadership (Startup Challenge #2)

Even with a strong vision, specifications fail without disciplined, accountable engineering leadership. Startup Challenge #2 is about having a CTO or Head of Engineering who:

- Personally believes in the product

- Owns trade-offs across power, performance, cost, and compliance

- Challenges assumptions instead of simply collecting requirements

- Is accountable for the final design decisions

Without strong leadership, specifications drift under schedule pressure, critical assumptions go unchallenged, and documented requirements become non-defensible. In regulated industries like automotive or aerospace, this can prevent certification, compromise safety, or create unresolvable integration issues.

Translating Vision and Leadership into ASIC Specifications (Startup Challenge #3)

Startup Challenge #3 is where vision and leadership converge into measurable, deployable specifications. Modern system companies increasingly expect system-ready, modular ASICs, not standalone silicon. ORTENGA helps startups define specifications within the context of the full system, reducing risk and accelerating time-to-market.

In radar and SATCOM systems, this means integrating antenna performance, signal processing, and RF chain requirements into a single silicon module. In automotive applications, it includes latency, redundancy, and functional safety constraints. By approaching ASIC design from a system perspective, ORTENGA ensures first silicon is deployable, verifiable, and aligned with end-user expectations, reducing both technical and commercial risk.

Startups typically define ASIC specifications using one of four approaches:

- Customer-driven requirements

- Copying a perceived equivalent ASIC

- Enhancing an existing ASIC’s specs

- Top-down system-to-silicon derivation

Only the fourth approach, when paired with clear vision and accountable leadership, consistently leads to scalable, market-aligned products.

Key Takeaways

Success in ASIC startups is rarely about better silicon—it’s about making the right system-level decisions early.

- Challenge #1: Vision and Use Cases ensures your product solves the correct problem under realistic conditions.

- Challenge #2: Engineering Leadership translates that vision into disciplined, defensible requirements.

- Challenge #3: ASIC Specifications solidify those decisions into measurable, verifiable silicon that meets both market and system demands.

In high-stakes industries like automotive, aerospace, radar, and SATCOM, integrating system awareness into every specification decision reduces risk, protects market windows, and maximizes ROI. Partnering with ORTENGA ensures your startup bridges the gap from concept to deployable system, avoiding the costly pitfalls that sink many first-time ASIC efforts.

Your Product Isn’t Selling? Maybe It’s Not the Product—It’s the Market.

You’ve spent years and millions developing a product, but the market isn’t responding. In Automotive, Radar, SATCOM, and Radio Communications, even the best technology can fail if it isn’t applied in the right way.

ORTENGA helps startups find the markets and applications where underperforming products can succeed. From system-level design to hardware, software, and RF expertise, we unlock hidden revenue streams and turn stalled investments into real ROI.

Don’t wait until it’s too late—discover where your technology truly belongs and make it sell.

When Robots Think, Cities Move

From semiconductor fabs cleaner than any hospital operating room to NASA rovers exploring distant planets, robots and drones are reshaping what’s possible. In Smart Cities, they inspect, deliver, and protect, working where humans can’t.

These autonomous systems combine power, awareness, navigation, and command execution, all boosted by AI. ORTENGA designs the full stack (architecture, antenna, ASIC, and algorithm), turning bold ideas into real-world solutions.

Why Statement of Work Alignment Determines Startup Execution Success

Startup Challenge Series

Every startup faces a sequence of execution challenges as it moves from vision to product to market. While these challenges are often discussed in terms of technology and funding, one of the most underestimated risk factors is misalignment at the Statement of Work (SoW) level.

ORTENGA addresses this gap by using the SoW as a strategic tool to manage startup risk across all three Startup Challenges.

Startup Challenge #1: Vision, Use Cases, and Problem Definition

Early-stage startups often articulate a compelling vision but lack full clarity on the system-level root causes behind the problem they are trying to solve. As a result, founders may verbally describe symptoms and expected outcomes without fully understanding the technical, regulatory, or integration implications.

This misalignment frequently appears during initial SoW drafting—where what is said differs from what is expected in deliverables.

Through structured discovery and SoW redlining, ORTENGA helps founders translate vision into technically grounded, outcome-oriented deliverables. This ensures the SoW reflects what truly needs to be solved, not just what appears urgent.

Startup Challenge #2: Engineering Leadership, Commitment, and Execution Risk

As startups commit resources, timelines, and capital, ambiguity becomes a material risk. An imprecise SoW can lock a company into incomplete assumptions, leading to rework, delays, or missed milestones.

ORTENGA uses the SoW re-drafting process as a risk-reduction mechanism, clarifying scope, ownership, and success criteria before execution begins. When ORTENGA leads both problem discovery and solution implementation, expectations become measurable, realistic, and aligned with execution capacity.

This alignment protects leadership teams from execution surprises and enables confident decision-making.

Startup Challenge #3: Silicon-to-System Integration and Outcome Delivery

The final challenge is translating engineering effort into system-level outcomes—performance, compliance, manufacturability, and market readiness.

Outcome-based SoWs explicitly tie deliverables to measurable results rather than activity-based tasks. This is especially critical in regulated and high-complexity markets such as automotive, aerospace, SATCOM, and radar, where failure to meet system requirements can delay or derail commercialization.

By aligning SoWs to outcomes, ORTENGA ensures that engineering investment directly supports product readiness and business objectives.
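
As a minimal sketch of what "tied to measurable results" can look like, each SoW deliverable can carry a metric and a pass/fail target. The `OutcomeDeliverable` class, the metric names, and all numbers below are hypothetical and do not represent an ORTENGA template.

```python
from dataclasses import dataclass


@dataclass
class OutcomeDeliverable:
    description: str
    metric: str                    # name of the measured quantity
    target: float                  # threshold agreed in the SoW
    higher_is_better: bool = True  # False for budgets such as power or cost

    def is_met(self, measured: float) -> bool:
        """Compare a measured value against the agreed target."""
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Hypothetical outcome-based deliverables for a radar module.
sow = [
    OutcomeDeliverable("Front end meets detection requirement", "detection_range_m", 200.0),
    OutcomeDeliverable("Module fits power budget", "power_w", 5.0, higher_is_better=False),
]

# Hypothetical validation results.
measurements = {"detection_range_m": 215.0, "power_w": 5.6}

status = {d.metric: d.is_met(measurements[d.metric]) for d in sow}
print(status)  # {'detection_range_m': True, 'power_w': False}
```

An activity-based task such as "complete RF layout review" has no equivalent of `is_met`, which is why it cannot signal whether the system requirement was actually achieved.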

The ORTENGA Perspective

At ORTENGA, the Statement of Work is not a contractual formality—it is a strategic execution framework. Redlining and re-drafting are essential steps to align vision, execution, and outcomes across all startup stages.

A clear, mutually agreed SoW becomes the first measurable signal of a startup’s readiness to execute—and a critical foundation for long-term success.

Risk Calculation by Startup Founders: Why SoW, Audits, and Early Validation Matter

When I started my consulting practice—and later built an engineering network—I was forced to make uncomfortable decisions early on. Almost all of them came down to the same tradeoff: time versus hard cash.

What experience quickly taught me is this:

Cash can be replaced. Lost time cannot.

Once time is spent, it’s gone forever—no pivot, no follow-on round, and no technical hire can bring it back.

For startup founders, every dollar committed should be treated as a risk decision. But risk is often miscalculated. Many teams focus only on how much they spend, not what that spend buys them.

This is where Statements of Work (SoWs), technical audits, and early design validation quietly become some of the highest-leverage decisions a founder can make.

SoW Decisions: Risk Is Hidden in Ambiguity

An imprecise or misaligned SoW doesn’t just waste money—it wastes time.

Unclear deliverables, misinterpreted requirements, and bottom-up execution paths force teams into rework cycles that surface months later, when course correction is most expensive.

A well-constructed SoW reduces risk by:

- Aligning technical execution to business outcomes

- Preventing scope drift before it becomes institutionalized

- Making assumptions explicit—early, not at tape-out or field test

Audits: Paying to See Reality Earlier

Technical audits are often viewed as a “nice-to-have” or something to do when problems appear. In reality, audits buy time by revealing misalignment before it compounds.

An early audit can surface:

- Architectural mismatches between antenna, ASIC, and algorithms

- Performance risks that won’t show up until late validation

- Hidden dependencies that quietly constrain roadmap flexibility

Not performing an audit doesn’t eliminate risk—it simply delays when you discover it.

Early Design Validation: The Cheapest Time to Be Wrong

The cheapest moment to be wrong is early.

Validation at the concept and architecture stage allows founders to make decisions when changes are still reversible.

Skipping early validation often leads to:

- Over-engineering the wrong solution

- Chasing specifications instead of use cases

- Discovering system-level issues only after schedules are locked

Early validation converts capital into clarity, and clarity protects time.

The ORTENGA Lens: Risk Reduction Is the Product

At ORTENGA, we work with founders who understand that execution risk—not technology alone—is what kills startups.

Our SoW structuring, design audits, and early validation engagements are designed to:

- Reduce irreversible time loss

- Replace assumption-driven execution with system-level alignment

- Ensure antenna, ASIC, and algorithm decisions support the same outcome

The goal isn’t to spend more.

The goal is to spend earlier where it saves months later.

For founders, the real risk isn’t investing in clarity—it’s discovering too late that time was the most expensive line item all along.

Before You Invest: Why Most Deep-Tech Startups Fail at Product Definition

Capital Moves Early—Product Definition Comes Too Late

I’ve seen this pattern play out many times.

A startup develops a promising new technology—novel, proprietary, and genuinely innovative. The technology has potential across multiple verticals. A VC sees one compelling product opportunity enabled by that technology and funds the company around it.

From there, a subtle but critical shift happens.

The startup defines the end product based on what the technology can do, not on what the market actually needs. The product roadmap becomes technology-driven rather than market-driven. Key performance metrics, system constraints, integration realities, and customer expectations are either underestimated or overlooked entirely at the conception stage.

Years pass. Millions of dollars are spent.

The product finally reaches the market—and it misses.

Missing features. Performance gaps. Misaligned assumptions. Problems that cannot be fixed with incremental tweaks.

At that point, consultants are brought in under various titles to “fix” the product. But it’s too late to return to the drawing board. The consultant’s first task becomes reconstructing why certain design decisions were made—often without access to the original founders, rationale, or constraints. This alone can take months, sometimes years.

Only after that forensic effort can the root cause be identified and presented back to investors:

the product was misdefined from day one.

The outcome is almost always the same:

- Stop the losses

- Wind down the company

- Salvage whatever value exists in patents or IP for use elsewhere

This failure mode is avoidable.

ORTENGA works with investors before capital is deployed, aligning technology, product definition, and market requirements from the start. We help ensure that:

- The right technology is selected for the right market

- Product roadmaps are driven by system-level and market-level needs

- Critical performance metrics are validated early, not discovered too late

Partner with ORTENGA before you invest, and reduce the risk of funding a technically impressive—but commercially misfit—product.

The Path of Least Resistance in Product Design

Why founder instinct—without system and market context—leads to product misfit and lost ROI

Water flows downhill along the path of steepest descent, the most direct drop from higher elevation to lower elevation.

Electric current flows to ground along the path of least resistance, from higher electrical potential to lower potential.

Many startup founders follow the same pattern when facing hard decisions at the very beginning of a high-tech product journey.

Under pressure, with limited time and capital, founders often rely on instinct during product conception and definition. This is natural. In most life situations, trusting instinct is a strength.

But in new product design, instinct is often the wrong guide.

Early product decisions demand a higher vantage point—a system-level view. What does the market actually need? What system will this product live in? What constraints, interfaces, and performance metrics already exist?

This kind of thinking feels unnatural because it resists the easiest path. It forces founders to step outside their own technology, preferences, and assumptions. Yet this discipline can save years of effort and millions of dollars downstream—and dramatically improve the odds of real return on investment.

When a product is defined purely by founder instinct—without understanding the system or market it must fit into—the result is predictable: product misfit. The technology may function, but it does not belong. It fails not because it was poorly engineered, but because it was never designed for the system in the first place.

Nature rewards least resistance.

Markets reward alignment.

Before you optimize execution, validate the system.

Many product failures are not engineering failures—they are definition failures made early, when instinct replaced system-level thinking. ORTENGA works with founders and executives at the product conception stage to audit assumptions, align system requirements, and ensure new products are designed for the market they must live in—not just the technology behind them.

If you’re defining a high-tech product in antennas, ASICs, or algorithms, engage ORTENGA early to reduce execution risk, avoid product misfit, and protect your time and capital.

→ Talk to ORTENGA before you commit to a design path

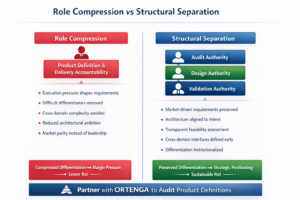

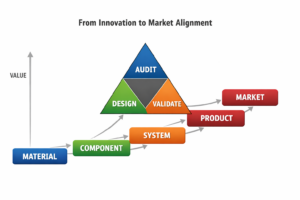

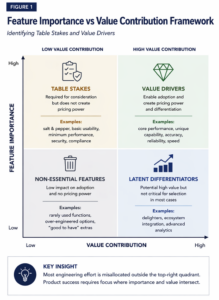

Why Most Products Fail Before Engineering Begins

Why Auditing the Technical Plan Before Design Reduces Investment Risk

Most products don’t fail because engineering execution is weak—they fail because no one audited the technical plan before design decisions locked in irreversible cost, risk, and misalignment with the market.

Technical product design and development is inherently challenging. Many organizations fail not because of poor execution, but because they never define a viable product concept—one with clear technical requirements, realistic market constraints, and a credible path to return on investment.

ORTENGA Engineering Risk & RoI Blueprint

Successful products require three distinct engineering functions, as defined by the ORTENGA Engineering Risk & RoI Blueprint:

- Audit: Product Concept and System-Level Technical Definition

Establishes what must be built, why it matters to the market, and which technical requirements govern success. This function converts product vision into an auditable system-level technical blueprint, exposing technical, market, and investment risk before design begins.

- Design: Engineering Architecture and Tradeoffs

Translates the audited system-level definition into architectures, trade studies, and detailed designs. Design decisions are made with confidence because the product definition has already been independently audited.

- Validate: Engineering Development and Execution

Implements, integrates, and validates the product against the original audited intent. Validation ensures that what is built truly meets the technical objectives, market needs, and investment assumptions before scale, tapeout, or deployment locks in cost and timeline.

Technical leadership must clearly understand the role of each function, enforce proper separation between them, and allocate the right resources at the right time. When these boundaries blur—or when design begins before the technical plan has been audited—risk compounds and product viability erodes.

This level of orchestration demands a technical leader with broad system insight, market awareness, and execution discipline. Many organizations, especially startups, do not have this capability in-house.

For startups building a single, mission-critical product, the consequences are amplified. Their success—or failure—depends entirely on getting the product definition right before committing significant time and capital.

Why This Matters for Technical Leadership

Technical leadership is not about managing engineers—it is about protecting the product and the investment behind it.

Leaders who skip early audits often discover fundamental issues only after design and development are underway, when fixes are expensive and schedules are immovable. Leaders who apply the ORTENGA Engineering Risk & RoI Blueprint make fewer assumptions, allocate capital more effectively, and give engineering teams a clear, stable target.

Engineering organizations, especially startups whose future depends on a single product, should be led by technical leaders who are mindful of these realities.

Partner with ORTENGA

ORTENGA partners with founders, executives, and investors at the point where leverage is highest: before engineering begins.

By applying the ORTENGA Engineering Risk & RoI Blueprint, organizations:

- Reduce execution and investment risk

- Align engineering with real market needs

- Preserve capital and timeline

- Build the right product before building it right

Partner with ORTENGA to bring disciplined technical leadership to your product design and development—so risk is addressed early and return on investment is protected from day one.

Recruiting vs. Partnering: A Time-to-Market Decision Framework

The Cost of Inaction: When Recruiting Delays Destroy RoI

ORTENGA helps high-tech teams audit execution risk early and convert stalled hiring into predictable product delivery.

Every unfilled engineering role quietly compounds risk. While teams wait for the “right” hire, schedules slip, design decisions stall, capital burns, and competitors move. The real danger isn’t the open position itself—it’s the assumption that waiting is free. In high-tech markets where timing defines winners, delayed recruiting becomes a strategic decision with measurable cost. This is the moment leaders must shift perspective: not how long to keep recruiting, but when to stop waiting and start executing.

In today’s era of digital communication, high-tech startups often pursue narrow, high-value market segments that demand highly specialized engineering skills. Recruiting for these roles is rarely fast—and in many cases, not realistic within product timelines.

Delayed hiring carries real consequences: slower time-to-market, erosion of return on investment (RoI), and in some cases, a missed market window entirely as competitors move faster.

At some point, a leadership decision must be made:

continue recruiting, form a partnership, or outsource the work altogether.

The Cost of Inaction

Inaction is not neutral—it has a cost. When that cost is quantified (lost revenue, delayed milestones, opportunity risk), the answer often points directly to a partnership or outsourcing model.
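One way to make that quantification concrete is a back-of-the-envelope comparison. The sketch below is only an illustration: the class, the function names, and the simple cost model (burn spent while waiting plus revenue pushed out by the same number of months) are assumptions, not an ORTENGA formula, and every figure a reader plugs in would be their own estimate.

```python
from dataclasses import dataclass


@dataclass
class DelayInputs:
    """Hypothetical inputs; each figure is the reader's own estimate."""
    months_delayed: int                # months the role stays unfilled
    monthly_burn: float                # spend that continues while work is stalled
    monthly_revenue_at_launch: float   # expected revenue once the product ships


def cost_of_inaction(inputs: DelayInputs) -> float:
    """Assumed model: burn while waiting plus revenue deferred by the delay."""
    burn_cost = inputs.months_delayed * inputs.monthly_burn
    deferred_revenue = inputs.months_delayed * inputs.monthly_revenue_at_launch
    return burn_cost + deferred_revenue


def cheaper_to_partner(inputs: DelayInputs, partner_cost: float) -> bool:
    """True when the partnership quote is below the estimated cost of waiting."""
    return partner_cost < cost_of_inaction(inputs)
```

The model deliberately ignores opportunity risk such as a missed market window, which is harder to price. Including it would only raise the estimated cost of waiting.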

Partnerships offer a shared burden: both parties contribute engineering depth, execution discipline, and accountability. This is fundamentally different from continued internal recruiting, where all schedule and execution risk remains in-house.

The 3–6 Month Rule

Across many high-tech disciplines, an unfilled role follows a predictable pattern:

- Up to ~3 months: Reasonable recruiting window

- Beyond 6 months: The role is unlikely to be filled

If the organization needs more than six months to recognize that a role will not be filled, it usually signals one of two realities:

- The role is not critical — the organization is functioning without it.

- The job description is unrealistic — easily tested by asking whether similar roles exist elsewhere and are being filled.

When the second case is ruled out, what remains is often the first: the role is important, but not important enough to justify continued delay.
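The rule above can be written as a small decision helper. This is a sketch under stated assumptions: the function name and return labels are hypothetical, and the handling of the window between three and six months is an assumption, since the rule as stated only describes the two ends.

```python
def recruiting_decision(months_open: float,
                        similar_roles_filled_elsewhere: bool) -> str:
    """Map how long a role has been open to a suggested next step."""
    if months_open <= 3:
        # Within the reasonable recruiting window.
        return "keep recruiting"
    if months_open <= 6:
        # Assumption: the text does not define this window, so the sketch
        # treats it as the time to commit to a decision date.
        return "set a decision deadline"
    if not similar_roles_filled_elsewhere:
        # Comparable roles are not being filled anywhere, which suggests
        # the job description itself is unrealistic.
        return "revise the job description"
    # The job description is realistic, yet the role is still open.
    return "partner or outsource"
```

For example, `recruiting_decision(8, True)` returns `"partner or outsource"`, while `recruiting_decision(8, False)` points back at the job description.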

The Strategic Alternative

At that point, the rational path forward is partnership or outsourcing.

Many successful companies choose partnerships because they recognize a hard truth:

it is neither cost-effective nor timely to organically build elite engineering teams across every discipline. They also understand that subtle engineering judgment and execution quality are what differentiate products in the market—making the choice of partner critical.

Where ORTENGA Fits

ORTENGA provides access to an elite Antenna, ASIC, and Algorithm engineering network that augments internal teams through carefully drafted, execution-driven Statements of Work (SoW).

The result is not just capacity—but momentum, predictability, and preserved RoI when timing matters most.

From Technology to Product: Choosing the Application That Matters

How ORTENGA Audits, Designs, and Validates Products Before Capital Is Committed

Every first-time founder believes their technology will win. That confidence is necessary—but it’s also where the most expensive mistakes begin. The real risk isn’t engineering execution; it’s choosing the wrong application, locking in the wrong requirements, and discovering too late that the market wanted something else. ORTENGA steps in before that happens—auditing the technical plan, designing the system with market reality in mind, and validating the product before capital and momentum are irreversibly committed.

Before a product is designed—or a roadmap is locked—the only rational first step is an Audit: a system-level examination of the application choice, technical assumptions, market constraints, and risk drivers that will ultimately determine whether the product deserves investment at all.

AUDIT — Define the Right Product

The Audit phase exists to answer one question before anything else: is this the right product to build at all?

This is not a design review and not a feature discussion. It is a disciplined evaluation of the system as a whole—how the technology maps to a real application, how the market values performance, and where hidden risks are already embedded.

ORTENGA’s Audit surfaces misalignment early, when change is still inexpensive and strategic options are still open. Many startups skip this step and move directly into design, only to discover later that they optimized the wrong metrics for the wrong customer.

During an Audit, ORTENGA examines:

- Application-market fit and willingness to pay

- System-level success metrics and constraints

- Implicit technical and integration assumptions

- Competitive alternatives and substitution risk

- Feasibility within realistic cost, power, schedule, and talent limits

Most startups don’t fail in execution—they fail the moment they commit to the wrong product definition.

DESIGN — Engineer the Right System

Once the product definition is audited and validated as worth pursuing, Design begins. This phase translates system-level intent into engineering reality.

Design is where trade-offs are made permanent. Architecture choices, performance allocations, interfaces, and cost structures all emerge here. Without a strong Audit, these decisions are often driven by intuition or convenience rather than market necessity.

ORTENGA’s Design discipline ensures that:

- Requirements trace directly back to market value

- Architecture choices support scalability and integration

- Performance is optimized where it actually matters

- Cost, power, and complexity remain controlled

Design is not about building more—it is about building only what is justified.

VALIDATE — Prove It Will Win

Validation is where assumptions meet evidence. This is not just testing functionality; it is proving that the product can meet its promised performance, integrate into its intended ecosystem, and compete under real-world conditions.

Many teams validate too late, after sunk cost limits their ability to change direction. ORTENGA’s approach validates early enough to protect RoI.

Validation focuses on:

- Technical feasibility against real requirements

- Performance verification tied to customer value

- Integration readiness with partner or platform ecosystems

- Confirmation that the product can scale economically

A product that cannot be validated early is not ready for full investment.

Closing Perspective